Best practices for developing meaningful measurement.

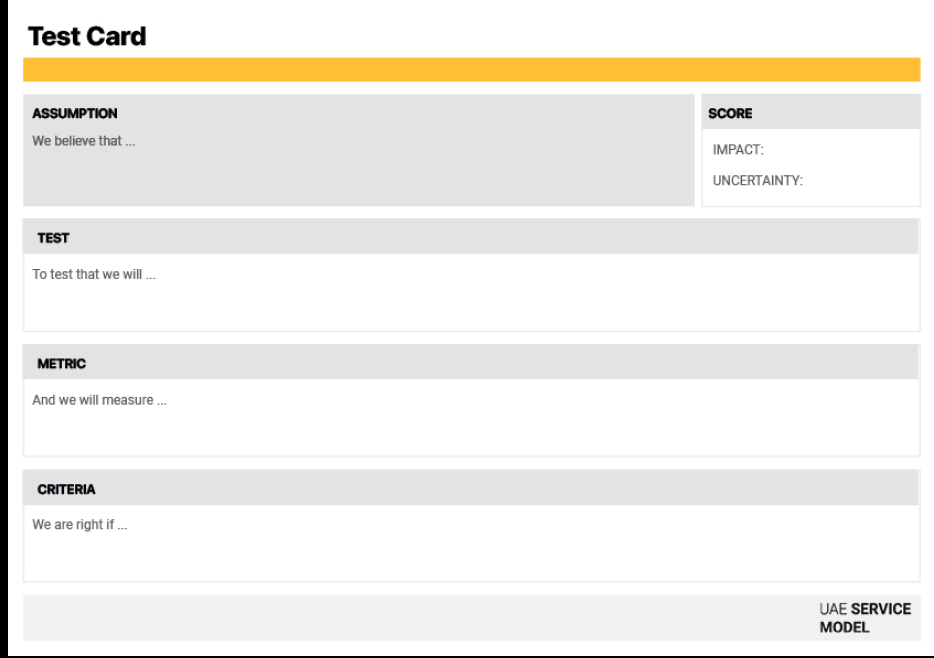

Test Card

The Test Card helps teams validate assumptions and ideas quickly before investing significant time or resources in implementation.

Scope & Details

The Test Card brings structure to experimentation by defining exactly what you are testing and how you will measure success, so teams don't just "try things out" at random. Scoring each assumption for Impact and Uncertainty forces you to test the riskiest assumptions first. The result is a shift from "We think this will work" to "We have proof this works."

Time: 1 (planning); varies per test

Difficulty: Beginner – Intermediate

Phase: Testing & validation

Input: Concept card or prototype

Materials: Test Card template (digital or printed), prototypes or mock-ups, user feedback data, analytics tools, evaluation metrics

Participants: Service designers, product owners, research leads, data analysts, and decision-makers

How to do it

- Define the assumption

Start by identifying the specific belief you want to validate.

"We believe that..." (e.g., "...simplifying the form will increase completion rates").

- Score priority

Rate the assumption to decide if it's worth testing now.

IMPACT: If this assumption is true, how valuable is it? (High/Low)

UNCERTAINTY: How sure are we that this is true? (High/Low; high impact combined with high uncertainty = test first.)

- Define the test

Describe the specific experiment you will run.

"To test that, we will..." (e.g., "...run an A/B test on the live website for one week").

- Select the metric

Define exactly what data you need to collect.

“And we will measure...” (e.g., “...the completion rate of both forms”).

- Set the success criteria

Define the pass/fail threshold before you run the test, so the goalposts can't move later.

“We are right if...” (e.g., “...the short form has a completion rate 20% higher than the long form”).

- Execution

The completed card is now your test plan. Run the test, collect the data, and record the result against the threshold you defined.
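The scoring rule in the Score priority step, together with the first tip in the Tips section (low uncertainty: just build it; low impact: don't test it), can be expressed as a small decision function. The Python below is a minimal sketch, not part of the toolkit: `TestCard`, `Level`, `priority` and the returned labels are hypothetical names, and the High/High scores on the example card are assumed for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    """Two-point scale used on the card for Impact and Uncertainty."""
    LOW = "low"
    HIGH = "high"


@dataclass
class TestCard:
    """Hypothetical record mirroring the four statements on the card."""
    assumption: str   # "We believe that..."
    test: str         # "To test that, we will..."
    metric: str       # "And we will measure..."
    criteria: str     # "We are right if..."
    impact: Level
    uncertainty: Level


def priority(card: TestCard) -> str:
    """Apply the card's scoring rule to decide what to do with an assumption."""
    if card.impact is Level.LOW:
        # Low impact: not worth the effort of a test, whatever the uncertainty.
        return "deprioritize"
    if card.uncertainty is Level.LOW:
        # High impact, but we are already fairly sure: just build it.
        return "build"
    # High impact and high uncertainty: the riskiest assumption, so test first.
    return "test first"


# Example card built from the statements in the steps above
# (the impact and uncertainty scores are assumed for illustration).
card = TestCard(
    assumption="simplifying the form will increase completion rates",
    test="run an A/B test on the live website for one week",
    metric="the completion rate of both forms",
    criteria="the short form has a completion rate 20% higher than the long form",
    impact=Level.HIGH,
    uncertainty=Level.HIGH,
)
print(priority(card))  # test first
```

Checking impact before uncertainty mirrors the tip that a low-impact assumption isn't worth testing, however uncertain it is.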

Assumption Generator: Feed your concept to an AI and ask: "What are the riskiest assumptions in this service concept? Turn them into 'We believe that...' statements."

Metric Suggestions: Ask an AI: "I want to test if users trust this new AI feature. What are 3 quantitative and 3 qualitative metrics I could use to measure 'Trust'?"

Tips

If uncertainty is low, just build it. If impact is low, don't waste time testing it.

Low-fidelity prototypes or quick pilots often yield the fastest learning.

Avoid vague goals: tie each test to a measurable outcome.

Real user behavior provides more reliable insights than internal feedback.

Record your process and results to share learnings with the wider team.

Testing is not a one-time step but a continuous feedback loop for improving solutions.

The "Simplified Feedback" Test

Scenario: A team wants to increase the number of citizens submitting service feedback.

By forcing the team to set a strict pass/fail criterion (20%) up front, the card turned a subjective debate into a measurable experiment. The team invested resources in the solution only after proving it would deliver concrete value.
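Checking a result against a threshold like this 20% is simple arithmetic, but "20% higher" can be read two ways: as a relative lift over the baseline, or as 20 percentage points. The Python below is a minimal sketch assuming rates expressed as fractions between 0 and 1; `relative_lift`, `passes` and the rates in the usage lines are hypothetical. It deliberately ignores sampling noise: a real A/B test would also need enough traffic and a significance check before the result counts as proof.

```python
def relative_lift(treatment_rate: float, control_rate: float) -> float:
    """Improvement of the treatment over the control, as a fraction of the control."""
    if control_rate <= 0:
        raise ValueError("control_rate must be positive to compute a relative lift")
    return (treatment_rate - control_rate) / control_rate


def passes(treatment_rate: float, control_rate: float,
           min_lift: float = 0.20, relative: bool = True) -> bool:
    """Check a pre-registered "We are right if..." threshold.

    relative=True reads "20% higher" as a relative lift over the control;
    relative=False reads it as 20 percentage points.
    """
    if relative:
        diff = relative_lift(treatment_rate, control_rate)
    else:
        diff = treatment_rate - control_rate
    return diff >= min_lift


# Hypothetical completion rates, for illustration only.
short_form, long_form = 0.52, 0.40
print(passes(short_form, long_form))                  # True  (30% relative lift)
print(passes(short_form, long_form, relative=False))  # False (12 percentage points)
```

Stating which reading you mean in the "We are right if..." line removes this ambiguity before the test starts.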

Related Design Principles

Our design principles that relate to the Test Card.